In recent years, AI has come into use in many areas of daily life, and one of the core technologies behind it is the neural network. Understanding neural networks gives a clearer picture of what AI can do and where it can be applied.

This article explains how neural networks work, how they relate to machine learning and deep learning, and where they are used. If you are considering adopting AI in your company, please read through to the end.

What is a Neural Network?

A neural network is a type of AI technology loosely modeled on the neural circuitry of the human brain. Computational units called nodes are arranged in layers, and each layer passes its results to the next to carry out complex processing.

This enables AI to learn patterns from vast amounts of data and perform advanced tasks such as image recognition and voice recognition. Conventional rule-based programs struggled to process ambiguous data or make predictions, whereas neural networks learn such behavior from examples, which has made these tasks practical.

Recently, neural networks have been utilized in various fields such as medicine, finance, and entertainment, attracting attention as a technology that greatly expands the possibilities of AI.

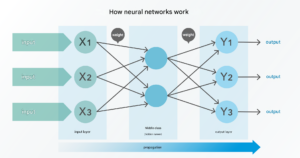

The Mechanism of a Neural Network

The mechanism of a neural network consists of three elements:

Input

Propagation

Output

First, data is fed into the input layer, where information such as images or numerical values is represented in numerical form. Subsequently, propagation occurs through intermediate layers called hidden layers, where each node performs weighted calculations.

The results of these calculations are then transmitted to the next layer, and finally, the output layer generates a result. For example, in image recognition, it outputs a judgment such as “Is this image a dog or a cat?”

By repeating this process on many examples and adjusting the weights so that the outputs move closer to the correct answers, the neural network learns patterns in data and becomes capable of handling complex tasks. Because neural networks are central to AI utilization, it is important to understand this general flow.
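The input, propagation, and output flow described above can be sketched in a few lines of Python. This is a minimal illustration rather than a production implementation: the weights, the sigmoid activation, the 2-2-1 layer sizes, and the "dog" versus "cat" threshold are all hypothetical values chosen for the example, and no training takes place here.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """Each node takes a weighted sum of its inputs, adds a bias, applies sigmoid."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def forward(inputs, layers):
    """Propagate the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical hand-picked weights: 2 inputs -> 2 hidden nodes -> 1 output node.
HIDDEN = ([[0.5, -0.4], [0.3, 0.8]], [0.0, -0.1])
OUTPUT = ([[1.2, -0.7]], [0.05])

# Two made-up numeric features standing in for an image.
score = forward([0.9, 0.1], [HIDDEN, OUTPUT])[0]
label = "dog" if score >= 0.5 else "cat"
print(f"score={score:.3f} -> {label}")
```

In a real network the weights would be learned from data instead of written by hand, but the layer-by-layer calculation has the same shape.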

Relationship with Machine Learning and Deep Learning

Terms often confused with neural networks include machine learning and deep learning. Let’s take a closer look at the relationship between each.

Neural Networks and Machine Learning

Machine learning is a fundamental AI technology that learns patterns from data to make predictions and classifications; the term covers a broad family of algorithms. A neural network, by contrast, is one specific method within machine learning, with a structure loosely modeled on the neural circuits of the human brain.

In other words, a neural network can be considered one of the tools within machine learning. While machine learning includes a wide range of methods, from simple linear models to complex non-linear models, neural networks are particularly well-suited for large-scale data and complex tasks.

Thus, if machine learning is the broad framework, neural networks are a specialized, advanced approach within it. The two terms are not interchangeable: every neural network is a machine learning method, but not every machine learning method is a neural network.

Neural Networks and Deep Learning

Deep learning can be described as a further evolution of neural networks. Specifically, deep learning is a technology that uses neural networks with many layers to enable learning of more complex and advanced patterns.

This multi-layered structure has enabled deep learning to achieve dramatic progress in fields such as image recognition, voice recognition, and natural language processing. In other words, deep learning is the use of deep (many-layered) neural networks, built on the same neural network framework.

Understanding this relationship, in which neural networks form the basis of deep learning and deep learning extends what they can do, will allow you to grasp their respective roles more clearly.

Learning Patterns of Neural Networks

How does learning proceed in a neural network? This section explains the learning patterns of neural networks by dividing them into two types.

Supervised Learning

Supervised learning is the most common method among neural network learning patterns.

Specifically, it involves giving the model pairs of input data and correct labels (answers), training it to predict the correct output. For example, by showing a photo and telling the AI “this is a dog,” the model learns to judge “this is a dog” when it sees a new photo later.

Here are examples of supervised learning applications:

Image recognition: Identifying animals like cats and dogs, or objects like cars and buildings.

Voice recognition: Analyzing human voices and converting them into text.

Medical diagnosis: Detecting lesions from X-ray images.

A major appeal of supervised learning is its ability to achieve high accuracy in tasks where the correct answer is predetermined. However, preparing labeled data takes time and money, and that cost must be factored in.
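To make the idea of learning from pairs of inputs and correct labels concrete, here is a minimal sketch that trains a single sigmoid node with gradient descent. The two numeric features, the tiny dataset, the learning rate, and the number of epochs are all assumptions made up for this example; a real image classifier would use far more data and a much larger network.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def train(examples, epochs=1000, learning_rate=0.5):
    """Fit one sigmoid node to (features, label) pairs by gradient descent."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            total = weights[0] * features[0] + weights[1] * features[1] + bias
            error = sigmoid(total) - label  # prediction minus correct answer
            # Nudge each parameter in the direction that shrinks the error.
            weights[0] -= learning_rate * error * features[0]
            weights[1] -= learning_rate * error * features[1]
            bias -= learning_rate * error
    return weights, bias

def predict(weights, bias, features):
    total = weights[0] * features[0] + weights[1] * features[1] + bias
    return 1 if sigmoid(total) >= 0.5 else 0

# Made-up labeled data: two features per photo, label 1 = dog, 0 = cat.
LABELED = [
    ((0.9, 0.8), 1), ((0.8, 0.9), 1), ((0.7, 0.7), 1),
    ((0.2, 0.1), 0), ((0.1, 0.3), 0), ((0.3, 0.2), 0),
]

weights, bias = train(LABELED)
print(predict(weights, bias, (0.85, 0.75)))  # a new, unseen "photo"
print(predict(weights, bias, (0.15, 0.20)))
```

The labels are what make this supervised: every update is driven by the gap between the prediction and the known correct answer.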

Unsupervised Learning

Unsupervised learning is a learning method used when correct labels do not exist.

In unsupervised learning, the model is given a large amount of data and learns to find the structure and patterns within that data on its own. In human terms, it resembles “inquiry-based learning,” in which a learner grasps characteristics by organizing the material independently, without explicit explanations.

Here are examples of unsupervised learning applications:

Clustering: Grouping customers based on purchasing tendencies.

Anomaly detection: Finding outliers that deviate from normal data.

Dimensionality reduction: Simplifying high-dimensional data.

A major advantage of unsupervised learning is its ability to utilize vast amounts of unlabeled data. It proves especially powerful in fields where data labeling is difficult or prior knowledge is limited.
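As a small illustration of finding structure without labels, the sketch below groups customers by monthly spend. Note that it uses k-means, a classic clustering algorithm that is not a neural network; it is swapped in here only because it shows the unsupervised idea in very little code. The spend figures and the starting centers are made up.

```python
def k_means_1d(values, centers, iterations=10):
    """Group 1-D values around the nearest center, then move each center to its group's mean."""
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for value in values:
            nearest = min(range(len(centers)), key=lambda i: abs(value - centers[i]))
            clusters[nearest].append(value)
        # An empty cluster keeps its previous center.
        centers = [
            sum(group) / len(group) if group else centers[i]
            for i, group in enumerate(clusters)
        ]
    return centers, clusters

# Made-up monthly spend per customer; no labels are provided.
SPEND = [12, 15, 14, 95, 102, 98, 13, 100]

centers, clusters = k_means_1d(SPEND, centers=[0.0, 50.0])
print(centers)
print(clusters)
```

No one told the algorithm which customers are low or high spenders; the two groups emerge from the data alone.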

Supervised and unsupervised learning thus address different types of problems, so it is important to choose the learning method that fits your company’s objectives and the characteristics of your data.

Representative Types of Neural Networks

Although we speak of “neural networks” as a single thing, there are actually various types. This section introduces representative ones.

CNN (Convolutional Neural Network)

A CNN (convolutional neural network) is a type of neural network specialized for image processing. It primarily extracts features from image data and performs classification and recognition based on those features.

A key characteristic of CNN is the mechanism called the convolutional layer. The convolutional layer plays the role of extracting features from image data, capturing pixel information locally to extract edges, patterns, and more.
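What a convolutional layer computes locally can be sketched as a small filter sliding over the image. The 4x6 "image" and the vertical-edge kernel below are hypothetical values for illustration; in a real CNN the kernel values are learned during training. As in most deep learning libraries, the kernel is applied without flipping, which is strictly speaking a cross-correlation.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for row in range(out_h):
        out_row = []
        for col in range(out_w):
            total = 0
            for i in range(kernel_h):
                for j in range(kernel_w):
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        output.append(out_row)
    return output

# Made-up grayscale image: dark on the left, bright on the right.
IMAGE = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

# Hand-written kernel that responds to a dark-to-bright vertical edge.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(IMAGE, VERTICAL_EDGE)
for line in feature_map:
    print(line)  # each row is [0, 27, 27, 0]
```

The large values appear only where the window straddles the boundary between dark and bright pixels, which is how a convolutional layer picks out an edge.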

Typical use cases for CNN include object recognition (classifying cats, dogs, etc.), autonomous driving (recognizing road signs and lanes), and the medical field (detecting lesions from MRI images). As such, CNN is widely used as an indispensable technology in projects handling image data.

RNN (Recurrent Neural Network)

An RNN (recurrent neural network) is a model specialized for learning the order of data or temporal sequences. A major feature of an RNN is its recurrent structure, which allows it to retain information from earlier steps while making subsequent predictions.
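The recurrent structure can be sketched with a single hidden value that is fed back in at every step. The scalar weights and the tanh activation below are assumptions chosen for illustration; real RNNs use weight matrices and learn them from data.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """Combine the current input with the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.8, w_h=0.5, b=0.0):
    """Feed a sequence through the cell one element at a time."""
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)  # h carries information forward
        states.append(h)
    return states

# Two sequences that differ only in their first element.
with_signal = run_rnn([1.0, 0.0, 0.0])
without_signal = run_rnn([0.0, 0.0, 0.0])

print(with_signal)
print(without_signal)
```

Even though the last two inputs are identical, the final hidden state of the first sequence is still non-zero, because the first input is carried forward through the recurrence. It also fades at each step, which hints at why long sequences are hard for a plain RNN.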

This mechanism enables RNN to efficiently handle time-series data or sequence data. However, when learning long sequences, the vanishing gradient problem can occur, so caution is needed.

The vanishing gradient problem refers to an issue where, during training, the gradient (the derivative used to update the weights) becomes extremely small as the error is propagated backward, so learning stalls as the network gets deeper or, in an RNN, as the sequence gets longer.
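A rough piece of arithmetic shows why the gradient shrinks. During backpropagation the gradient is multiplied by a factor at every layer or time step; if that factor is below 1, the product decays exponentially. The per-step factor of 0.5 below is an assumed, simplified value; in a real network the factor varies from step to step.

```python
def gradient_scale(per_step_factor, steps):
    """Multiply the same factor once per step, as backpropagation does across layers or time."""
    scale = 1.0
    for _ in range(steps):
        scale *= per_step_factor
    return scale

for steps in (1, 10, 50):
    print(steps, gradient_scale(0.5, steps))
```

After 10 steps the gradient is already below one thousandth of its original size, and after 50 steps it is effectively zero, so the weights that influence the earliest steps receive almost no update.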

Typical use cases for RNN include voice recognition (analyzing continuous sounds), translation (generating sentences considering word order), stock price prediction, and weather data analysis. Thus, RNN is an effective option for tasks that involve a temporal dimension.

LSTM (Long Short-Term Memory)

LSTM is an extended model developed to mitigate the vanishing gradient problem, a weakness of RNNs. As mentioned, the gradient carrying the error gradually diminishes as it propagates backward, so the parts of the network farthest from the output (in an RNN, the earliest time steps) barely learn, which limits model accuracy.

LSTM maintains an internal memory cell, allowing it to retain information over long periods while discarding unnecessary information as needed. This enables efficient learning even with long sequence data. A key feature of LSTM is its ability to handle more advanced tasks, such as generating text while considering the overall context of a sentence.
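The memory cell and its gates can be sketched with scalar values. This follows the commonly used LSTM formulation with forget, input, and output gates; the parameter names, the `make_params` helper, and the gate biases are hypothetical and hand-set for illustration, whereas a trained LSTM learns them.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def make_params(**overrides):
    """Scalar LSTM parameters, all zero unless overridden (hypothetical helper)."""
    names = ["wf", "uf", "bf", "wi", "ui", "bi", "wo", "uo", "bo", "wg", "ug", "bg"]
    params = {name: 0.0 for name in names}
    params.update(overrides)
    return params

def lstm_step(x, h_prev, c_prev, p):
    """One LSTM step: decide what to forget, what to write, and what to expose."""
    forget_gate = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])
    input_gate = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])
    output_gate = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])
    candidate = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])
    c = forget_gate * c_prev + input_gate * candidate  # updated memory cell
    h = output_gate * math.tanh(c)                     # visible hidden state
    return h, c

def run_cell(c_start, steps, params):
    """Run the cell on zero inputs and return the final memory value."""
    h, c = 0.0, c_start
    for _ in range(steps):
        h, c = lstm_step(0.0, h, c, params)
    return c

# Forget gate held nearly open: the stored value survives 10 steps.
kept_memory = run_cell(1.0, 10, make_params(bf=5.0, bi=-5.0))
# Forget gate held nearly closed: the stored value is discarded.
cleared_memory = run_cell(1.0, 10, make_params(bf=-5.0, bi=-5.0))

print(kept_memory, cleared_memory)
```

Because the memory cell is updated by a gated addition rather than being squashed at every step, information (and the gradient that flows along the same path) can persist over many steps when the forget gate stays close to 1.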

Typical use cases for LSTM include natural language processing (chatbots, text generation), music generation (composing considering melodic structure), and biometric data analysis (analyzing heart rate or brainwave patterns). Thus, LSTM is effective for tasks that require capturing long-term dependencies.

Autoencoder

An autoencoder is a neural network that compresses input data and then reconstructs the original input from that compressed representation. Autoencoders are used primarily to extract the essential features of data and are classified as a form of unsupervised learning.

A typical autoencoder consists of two components: an “encoder” and a “decoder.” The encoder transforms data into a latent space, and the decoder recreates the input based on that latent representation. A major characteristic of autoencoders is their ability to utilize this mechanism for tasks like data denoising and anomaly detection.
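The encoder and decoder roles, and their use for anomaly detection, can be sketched with a deliberately tiny linear example. The weights here are hand-set under the assumption that normal data lies near the line y = x; a real autoencoder would learn its weights by minimizing reconstruction error and would usually be non-linear. The threshold of 0.5 is also an arbitrary choice.

```python
import math

# Hand-set weights (an assumption): normal points are taken to lie near y = x,
# so a single latent value measured along that line is enough to describe them.
DIRECTION = (1 / math.sqrt(2), 1 / math.sqrt(2))

def encode(point):
    """Compress a 2-D point into one latent number."""
    return point[0] * DIRECTION[0] + point[1] * DIRECTION[1]

def decode(latent):
    """Rebuild a 2-D point from the latent number."""
    return (latent * DIRECTION[0], latent * DIRECTION[1])

def reconstruction_error(point):
    """Distance between the original point and its reconstruction."""
    return math.dist(point, decode(encode(point)))

def is_anomaly(point, threshold=0.5):
    """Flag points that the autoencoder cannot reconstruct well."""
    return reconstruction_error(point) > threshold

for sample in [(1.0, 1.1), (2.0, 1.9), (1.0, 3.0)]:
    print(sample, round(reconstruction_error(sample), 3), is_anomaly(sample))
```

Points that follow the pattern the model was built around pass through the bottleneck almost unchanged, while a point that breaks the pattern comes back distorted, and that reconstruction error is the anomaly signal.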

Use cases for autoencoders include data compression (efficient storage of image data), noise removal (restoring old photos or audio), and anomaly detection (detecting patterns deviating from normal data). Thus, using an autoencoder is a good approach when you want to grasp the characteristics of your data.

We have introduced four types of neural networks here, but their features and areas of expertise are diverse. Therefore, by appropriately choosing the type of neural network according to the application, you can maximize the effectiveness of AI utilization.

Points to Consider When Using Neural Networks

While neural networks are indispensable for AI utilization, there are several key points to be aware of when using them. This section introduces three important considerations.

Ensure Data Quality and Quantity

Neural networks achieve high accuracy by learning patterns from large amounts of data. However, if data is insufficient or contains significant noise, model performance will degrade substantially. Therefore, it is crucial to thoroughly preprocess and clean the data to ensure appropriate quantity and quality.
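As one small example of preprocessing, the sketch below drops missing values and rescales a feature to zero mean and unit variance (standardization). This is only one of many possible cleaning steps, and the function name and sample values are made up for illustration.

```python
import statistics

def clean_and_standardize(values):
    """Drop missing entries, then rescale to mean 0 and standard deviation 1."""
    kept = [v for v in values if v is not None]
    mean = statistics.fmean(kept)
    std = statistics.pstdev(kept)
    if std == 0:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in kept]
    return [(v - mean) / std for v in kept]

# Made-up sensor readings with one missing value.
raw = [2.0, None, 4.0, 6.0]
print(clean_and_standardize(raw))
```

Putting features on a comparable scale generally helps gradient-based training behave more predictably, although the right preprocessing always depends on the data at hand.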

Implement Measures to Prevent Overfitting

A major characteristic of neural networks is their high flexibility. This flexibility can lead to “overfitting,” where the model fits the training data too closely, resulting in poor generalization performance on unseen data. To prevent this problem, techniques such as dropout and other regularization methods should be employed, and an appropriate model size must be chosen.
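The two techniques named above can each be sketched in a few lines. The dropout variant shown is the commonly used "inverted" form, which rescales the surviving activations during training; the L2 penalty stands in for "regularization methods" as one typical example. The rate, the strength, and the sample values are assumptions for illustration.

```python
import random

def dropout(activations, rate, rng):
    """Inverted dropout: zero each activation with probability `rate`, rescale the rest."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

def l2_penalty(weights, strength):
    """Extra loss term that grows with the size of the weights."""
    return strength * sum(w * w for w in weights)

rng = random.Random(0)  # fixed seed so the example is repeatable
print(dropout([1.0, 1.0, 1.0, 1.0, 1.0, 1.0], rate=0.5, rng=rng))
print(l2_penalty([3.0, 4.0], strength=0.01))
```

Dropout is applied only during training and switched off at prediction time, while the L2 penalty is added to the training loss so that large weights are discouraged.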

Consider Computational Costs and Resources

Training neural networks requires significant computational resources, and deep models, in particular, demand substantial time and cost. For efficient model development, careful consideration must be given to selecting the hardware environment (GPUs, TPUs) and potentially implementing distributed training. It is important to plan proactively to avoid project setbacks due to budget or resource constraints.
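A first, rough feel for resource needs can come from counting parameters. The sketch below counts weights and biases for a fully connected network and converts the total into memory at 4 bytes per parameter (32-bit floats). The layer sizes are hypothetical, and the estimate covers only the parameters themselves, not activations, gradients, or optimizer state, which add considerably more during training.

```python
def dense_parameter_count(layer_sizes):
    """Weights (inputs x outputs) plus one bias per output, summed over all layers."""
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )

def memory_megabytes(parameters, bytes_per_parameter=4):
    """Storage for the parameters alone, in megabytes (10^6 bytes)."""
    return parameters * bytes_per_parameter / 1_000_000

# Hypothetical network: 784 inputs -> 128 hidden nodes -> 10 outputs.
count = dense_parameter_count([784, 128, 10])
print(count, "parameters")
print(round(memory_megabytes(count), 3), "MB")
```

Running the same calculation for a wider or deeper design before training starts gives an early signal of whether the planned hardware is in the right range.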

Conclusion

This article has explained the mechanism of neural networks, their relationship with machine learning and deep learning, and examples of their application.

Neural networks can be put to work in a wide range of business scenarios. Revisit this article whenever you want to review how they work and what the representative types are.
